Unity Catalog

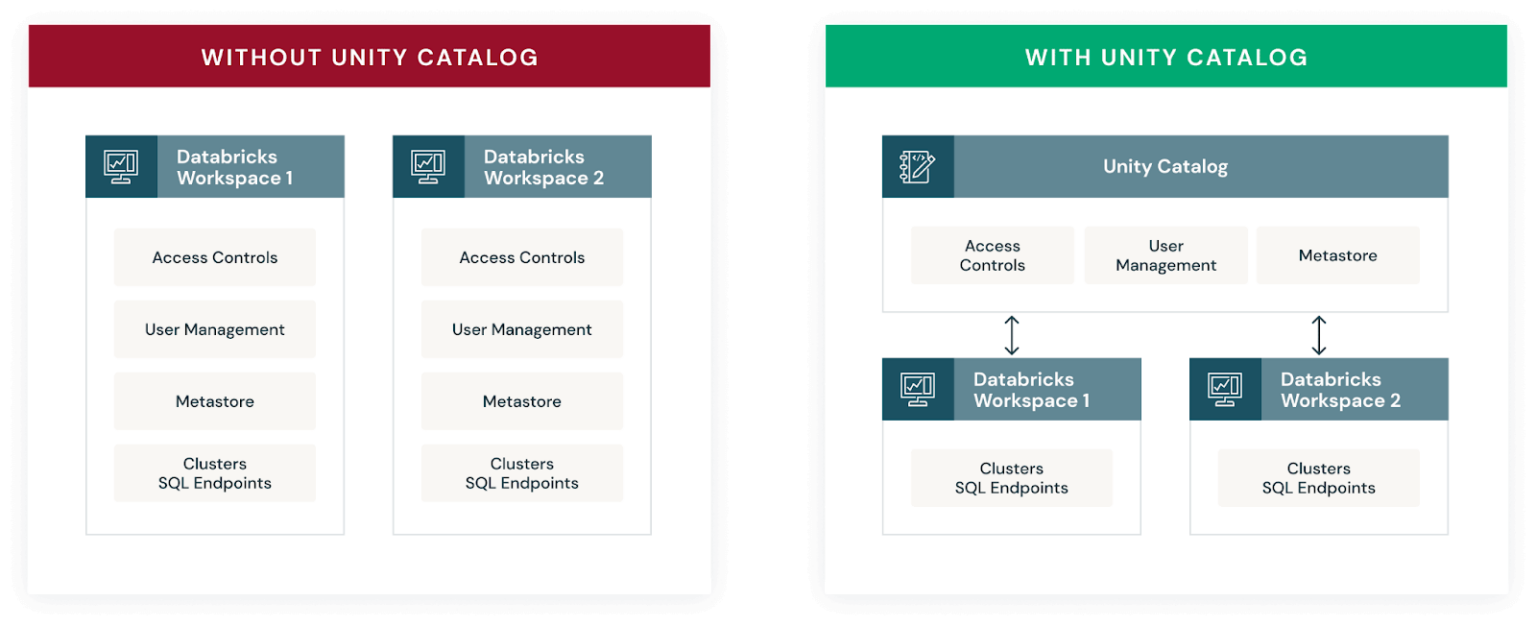

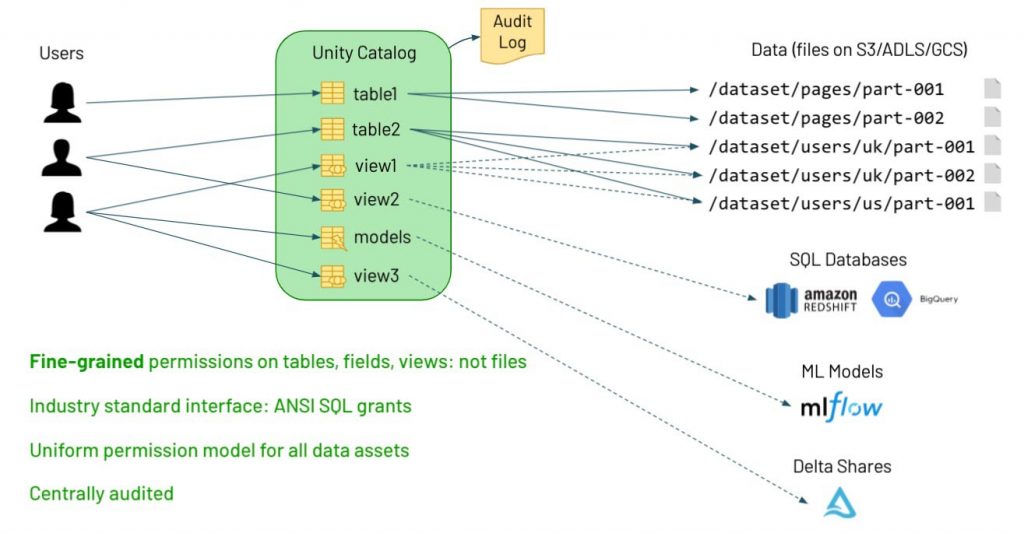

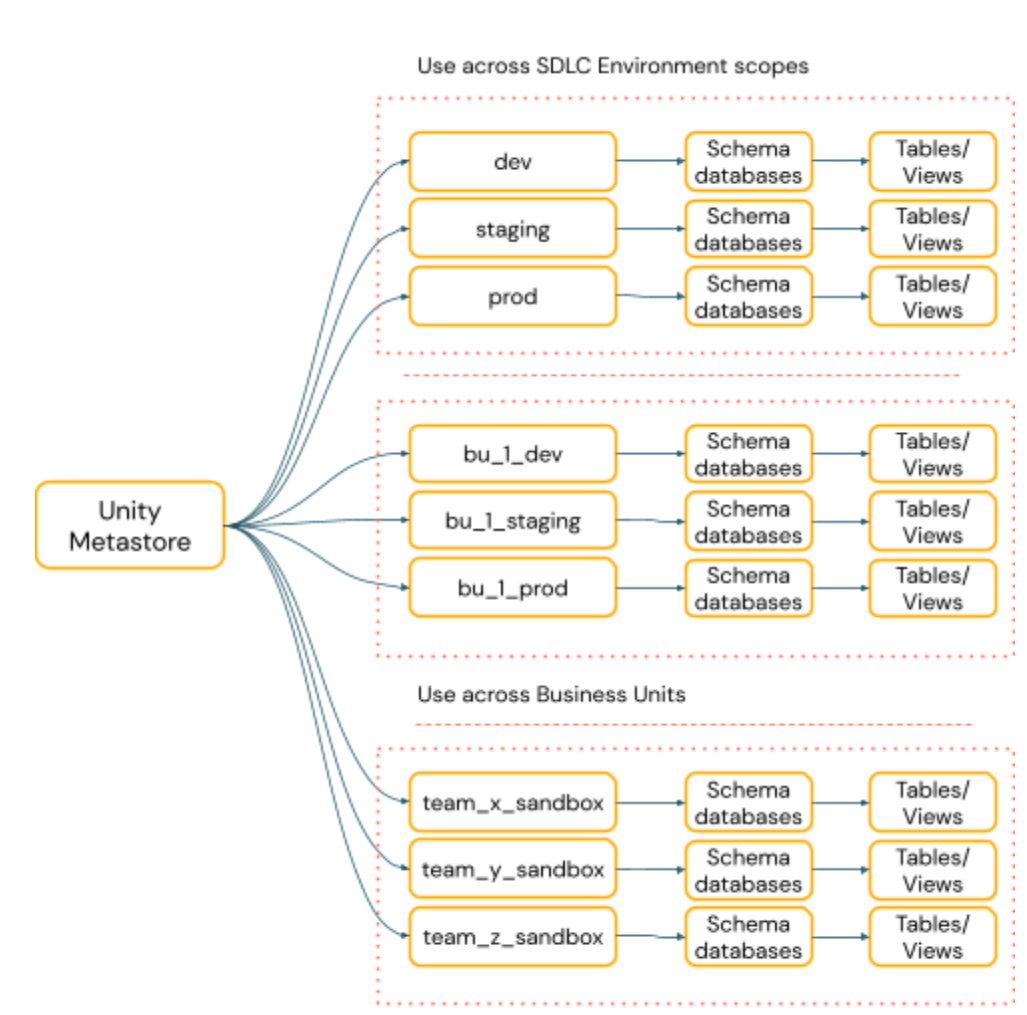

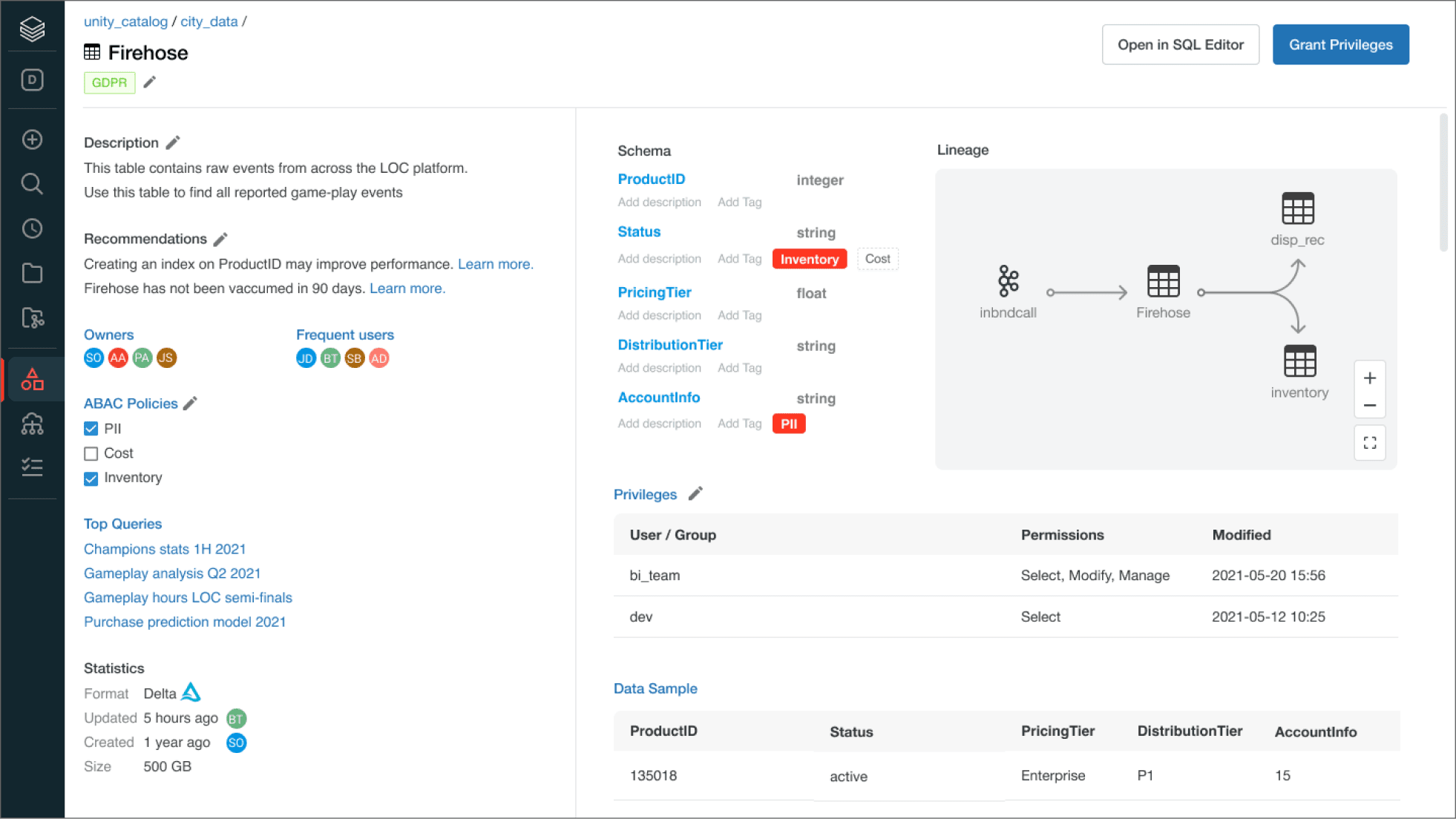

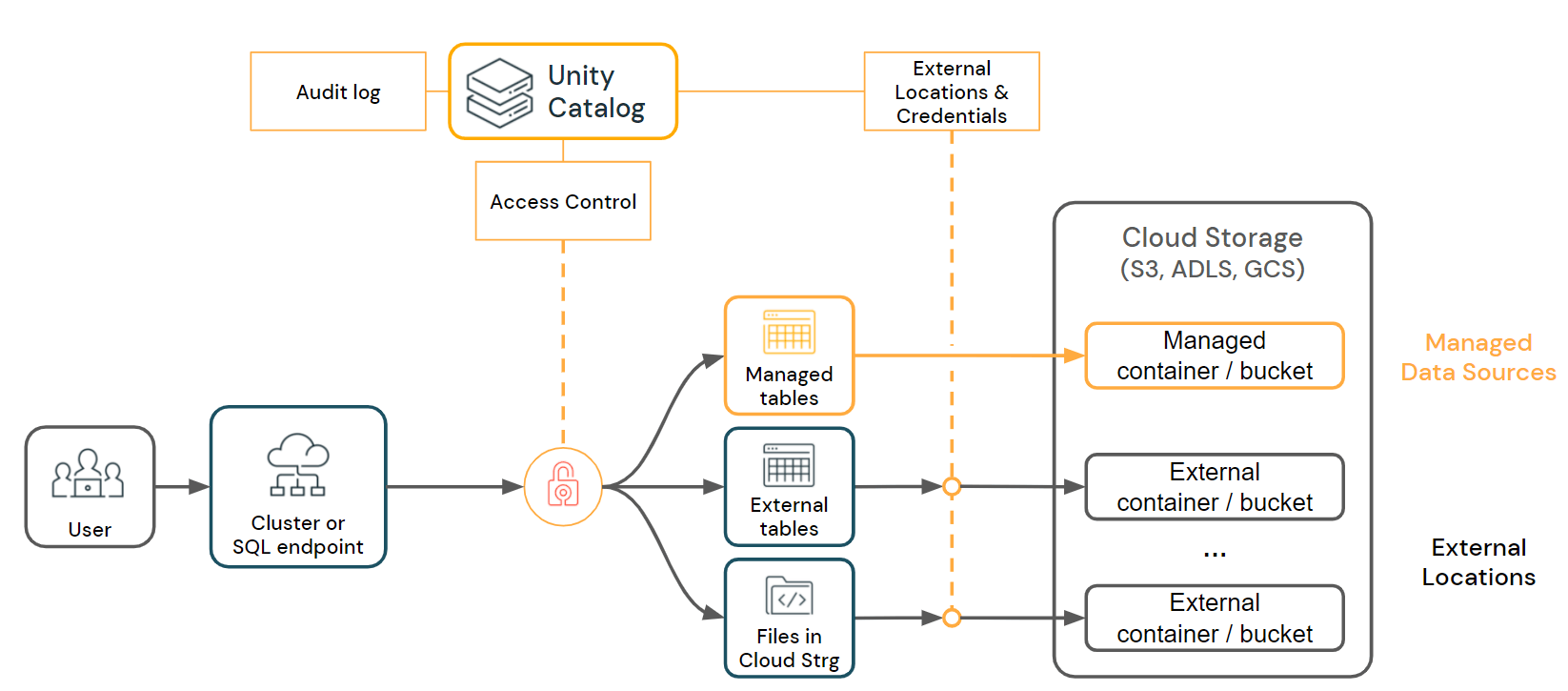

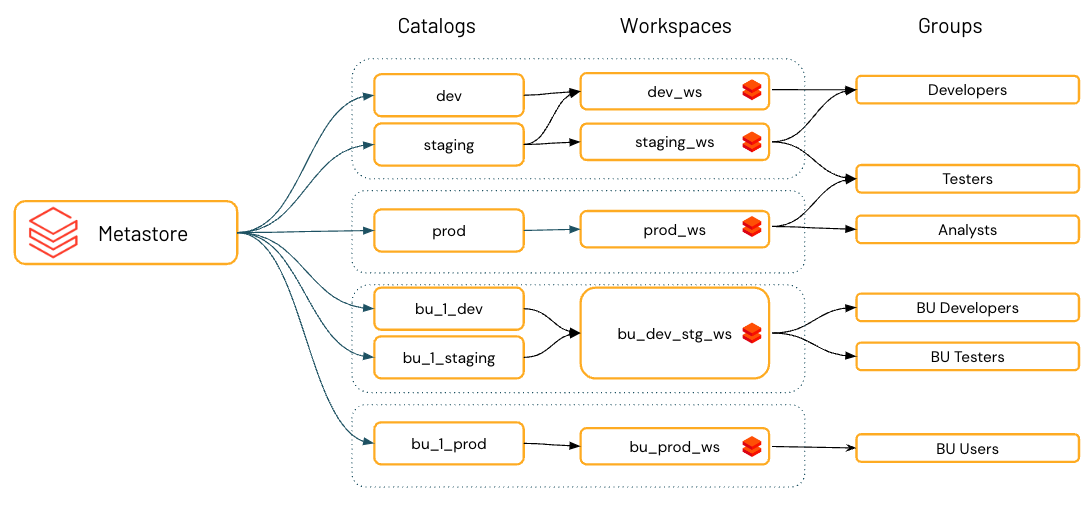

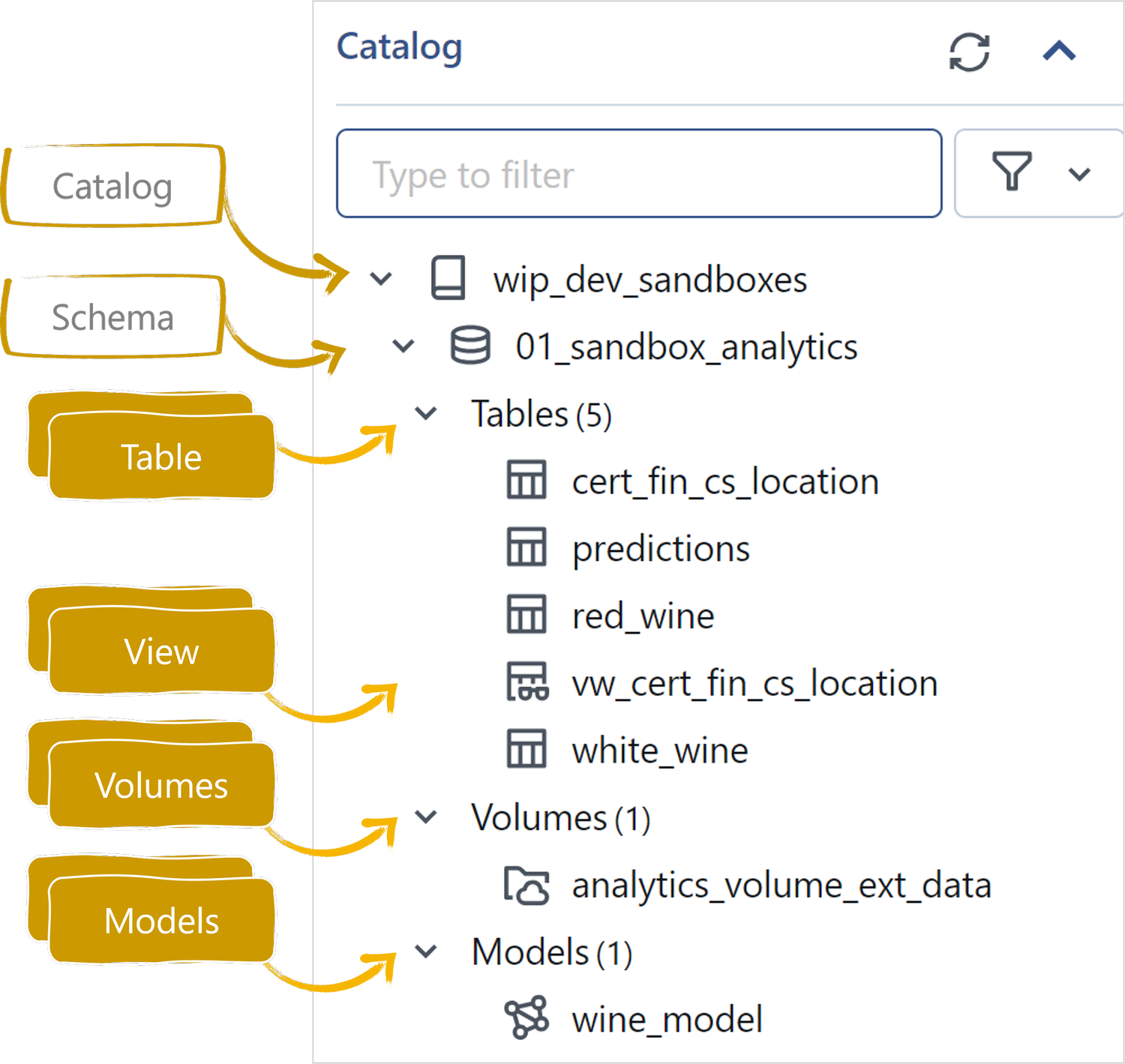

Unity Catalog creates a central repository. Databricks Unity Catalog, launched several years ago, is a unified governance solution for all data and AI assets, including files, tables, and machine learning models, in your lakehouse on any cloud. It is a comprehensive governance solution for data and AI on Databricks (including Azure Databricks) that adds an extra layer of security for accessing data. It is also described as an open, multimodal catalog for data and AI and as the industry's only universal catalog for data and AI: its multimodal interface supports any format, engine, and asset.

The catalog is the primary unit used to organize your data objects in Unity Catalog. When you create a catalog, you're given two options, one of which is this typical catalog. Unity Catalog compute covers standard and dedicated clusters, SQL warehouses, Delta Live Tables (DLT), and serverless compute. To get started, follow the steps to enable your workspace, add users, and make assignments.

1. Design and implement a governance framework using Unity Catalog: lead the design and implementation of a comprehensive data governance strategy using Unity Catalog.

Several resources support that work. Best-practice guidance provides recommendations for using Unity Catalog and Delta Sharing to meet your data governance needs. Introductory blogs and guides explain how Unity Catalog works, how to use it to manage your data assets, how to manage data and AI object access and track data lineage, and how it simplifies data governance, security, access control, and compliance across cloud platforms. One article gives an overview of the cloud storage connections required to work with data using Unity Catalog. Databricks has also introduced the Unity Catalog migration solution, designed to streamline and accelerate your data and AI governance journey.

You can use Unity Catalog to capture runtime data lineage across queries in any language executed on an Azure Databricks cluster or SQL warehouse. The requirements listed are that the workspace must have Unity Catalog enabled, tables must be registered in a Unity Catalog metastore, and queries must use the Spark DataFrame (for example, Spark SQL).
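The three requirements mentioned in the text (workspace enabled for Unity Catalog, tables registered in a Unity Catalog metastore, queries using the Spark DataFrame) can be treated as a simple pre-flight checklist. The following is a minimal sketch in plain Python; the class `UnityCatalogPrerequisites` and the function `unmet_requirements` are hypothetical names made up for illustration and are not part of any Databricks API. The flags would have to be filled in by hand or by your own tooling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UnityCatalogPrerequisites:
    """Hypothetical record of the three requirements named in the text."""
    workspace_uc_enabled: bool      # workspace has Unity Catalog enabled
    tables_in_uc_metastore: bool    # tables are registered in a Unity Catalog metastore
    queries_use_dataframe: bool     # queries go through the Spark DataFrame


def unmet_requirements(prereqs: UnityCatalogPrerequisites) -> list[str]:
    """Return a human-readable message for every requirement that is not met."""
    checks = [
        (prereqs.workspace_uc_enabled,
         "The workspace must have Unity Catalog enabled."),
        (prereqs.tables_in_uc_metastore,
         "Tables must be registered in a Unity Catalog metastore."),
        (prereqs.queries_use_dataframe,
         "Queries must use the Spark DataFrame."),
    ]
    return [message for ok, message in checks if not ok]


if __name__ == "__main__":
    # Example: the workspace is enabled, but the tables are not registered yet.
    status = UnityCatalogPrerequisites(True, False, True)
    for problem in unmet_requirements(status):
        print(problem)
```

An empty list means all three stated requirements are satisfied; anything else names what still needs attention.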

Databricks Unity Catalog — Unified governance for data, analytics and AI

Unity Catalog best practices Databricks on AWS

An Ultimate Guide to Databricks Unity Catalog — Advancing Analytics

Unity Catalog Demo Databricks

Databricks 0 a 100 [5] Unity Catalog Parte 1 Tudo que você

Introducing Unity Catalog A Unified Governance Solution for Lakehouse

Introducing Databricks Unity Catalog Fine-grained Governance for Data

Unity Catalog Setup A Guide to Implementing in Databricks

Unity Catalog Databricks

Unity Catalog Is A Comprehensive Governance Solution For Data And AI On Databricks, Adding An Extra Layer Of Security For Accessing Data.
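In practice, that extra security layer is expressed through privilege grants on catalogs, schemas, and tables, which Unity Catalog addresses with three-level names of the form `catalog.schema.table`. The sketch below is illustrative only and is not taken from the source: it builds the SQL `GRANT` statements a principal would typically need to read one table. The helper names, the table `main.sales.orders`, and the group `analysts` are hypothetical, and the code only produces strings; it does not connect to Databricks or execute anything.

```python
def split_table_name(full_name: str) -> tuple[str, str, str]:
    """Split a three-level name (catalog.schema.table) into its parts."""
    parts = full_name.split(".")
    if len(parts) != 3 or not all(parts):
        raise ValueError(
            f"expected a three-level name 'catalog.schema.table', got {full_name!r}"
        )
    return parts[0], parts[1], parts[2]


def read_access_grants(full_name: str, principal: str) -> list[str]:
    """Build GRANT statements giving `principal` read access to one table.

    Reading a table requires a privilege at each level of the hierarchy:
    USE CATALOG on the catalog, USE SCHEMA on the schema, SELECT on the table.
    """
    catalog, schema, table = split_table_name(full_name)
    return [
        f"GRANT USE CATALOG ON CATALOG {catalog} TO `{principal}`",
        f"GRANT USE SCHEMA ON SCHEMA {catalog}.{schema} TO `{principal}`",
        f"GRANT SELECT ON TABLE {catalog}.{schema}.{table} TO `{principal}`",
    ]


if __name__ == "__main__":
    # Hypothetical table and group names, used only for illustration.
    for statement in read_access_grants("main.sales.orders", "analysts"):
        print(statement)
```

Generating the statements as plain strings keeps the example runnable anywhere; on a Unity Catalog enabled workspace they could be reviewed and then run from a SQL editor or notebook by someone with the right to grant those privileges.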

They Support All Unity Catalog Compute (Standard And Dedicated Clusters, SQL Warehouses, Delta Live Tables (DLT) And Serverless Compute).

Follow The Steps To Enable Your Workspace, Add Users, And Assign.

Lead The Design And Implementation Of A Comprehensive Data Governance Strategy Using Unity Catalog.
